Workday Is the Friend Graph

What a16z's System of Intelligence thesis looks like when you apply it to where the data actually lives.

On May 14, three partners at a16z — Gio Ahern, Stephenie Zhang, and Alex Immerman — published a piece arguing that the most valuable layer of GTM software is no longer the database. It's the layer that reads the database, reasons across it, and acts. They called this the System of Intelligence, and they used the CRM as their example.

The argument is more true in talent than it is in sales. They picked the more competitive battleground.

Their metaphor is Facebook's friend graph and news feed. The friend graph was supposed to be undisruptable. Then the news feed showed up, and the graph became one of many inputs feeding it. The graph never went away. It just stopped being where users went.

Now apply that to talent.

The HRIS is the friend graph. Workday holds the employee record. UKG holds it. Oracle HCM holds it. The ATS is also the friend graph. Greenhouse holds the candidate record. iCIMS holds it. Lever holds it. These systems own the database, the integrations, and a decade of accumulated switching cost. They are not going anywhere. They will continue to own what they own.

But none of them is where a CHRO or a VP of Talent should be starting her morning. Opening Workday gives you a static org chart. Opening Greenhouse gives you a backlog of unreviewed candidates. Neither tells you what will happen tomorrow, what already broke yesterday, or what to do about either.

The System of Intelligence in talent is the layer that reads across every System of Record in the company at once, reasons over them, and surfaces a prioritized list of decisions worth making today. Each item carries a quantified cost of action, a quantified cost of inaction, and a drafted workflow ready to execute across the underlying systems with one approval.
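
A minimal sketch of what one item in that list could look like as a data structure. Every name here (`FeedItem`, `net_benefit`, `prioritize`) is hypothetical, the dollar figures are made up for illustration, and ranking by cost of inaction minus cost of action is an assumption, not a description of any production system.

```python
from dataclasses import dataclass, field


@dataclass
class FeedItem:
    """One decision surfaced in the feed (all field names are hypothetical)."""
    title: str
    cost_of_action: float     # estimated dollars to carry out the workflow
    cost_of_inaction: float   # estimated dollars lost if nothing is done
    workflow_steps: list[str] = field(default_factory=list)  # drafted, not yet executed
    approved: bool = False    # flipped only by a human approval

    @property
    def net_benefit(self) -> float:
        # Assumed ranking signal: what acting saves relative to doing nothing.
        return self.cost_of_inaction - self.cost_of_action


def prioritize(items: list[FeedItem]) -> list[FeedItem]:
    """Return the items ordered by highest net benefit first."""
    return sorted(items, key=lambda item: item.net_benefit, reverse=True)


# Illustrative values only.
feed = prioritize([
    FeedItem("Post internal mobility move", 2_000.0, 15_000.0, ["post to mobility portal"]),
    FeedItem("Send retention playbook", 5_000.0, 120_000.0, ["draft manager note", "set deadline"]),
])
```

With these illustrative numbers the retention item ranks first, because the gap between its cost of inaction and its cost of action is the largest. A real ranking would likely weigh urgency and confidence as well.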

The Systems of Record are still where the data lives. The System of Intelligence is where the work happens.

What the morning looks like

a16z walked through the morning of an account executive in 2027. The AE opens her laptop to a research agent already done reading the prospect's 10-K, a dialer already coached on the recurring objections, and notes from yesterday's call already structured back into Salesforce. The CRM is still authoritative. She just doesn't go there anymore.

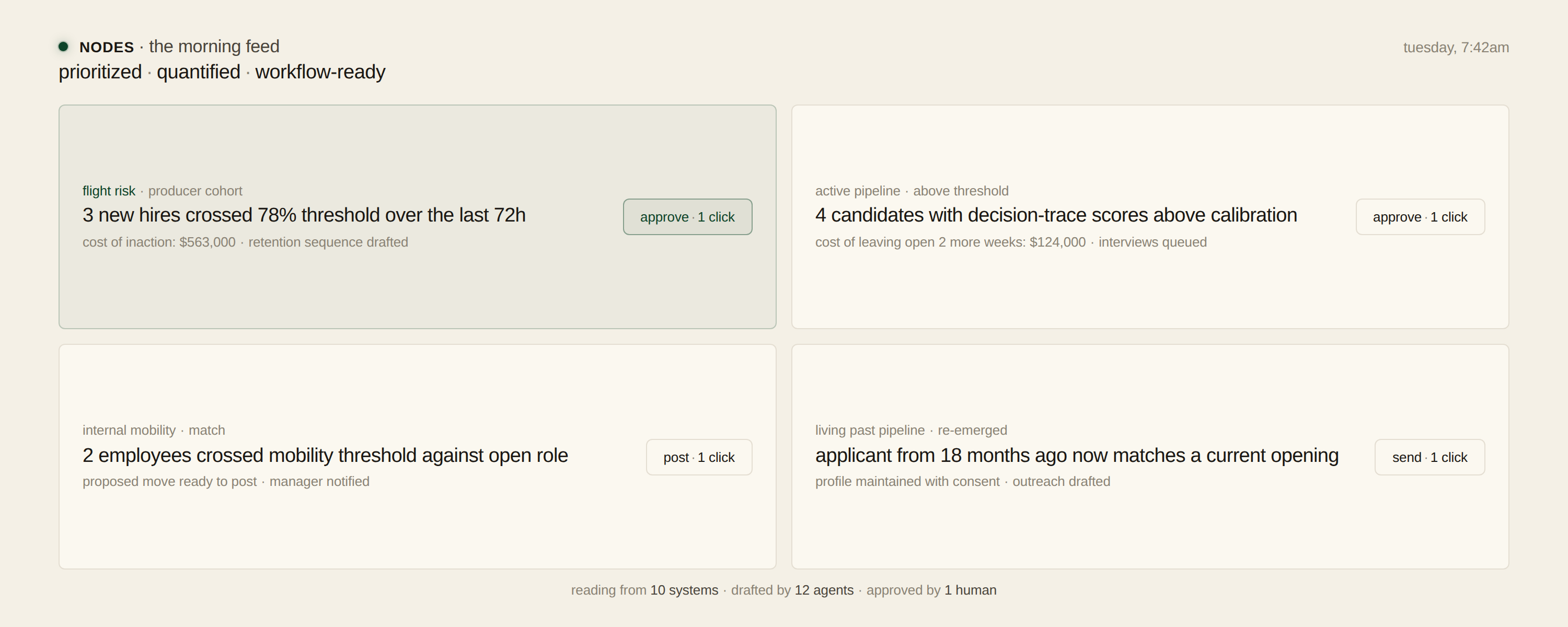

The morning of a VP of Talent in 2027 looks similar, with one difference. Each item in her feed is not a notification. Each item is a drafted workflow.

She opens her laptop to a prioritized feed.

At the top: three new hires in the producer cohort crossed a 78% flight-risk threshold over the last 72 hours. Each one shows the cost of inaction in dollars, meaning what the company (here, an insurance carrier) loses on production and replacement if this person walks, and a retention sequence drafted and ready for the manager to send. One click sends each playbook with a deadline.

Underneath: four candidates in the active pipeline have decision-trace scores above the threshold the model has calibrated against actual production. Each shows the cost of leaving the requisition open another two weeks at the current funnel rate. Approving them schedules interviews on the hiring managers' calendars, sends the rejections in Greenhouse, and updates the requisition state.

Underneath: two existing employees crossed an internal-mobility threshold against an open role two layers up. The proposed move is one click away from posting in the internal mobility portal.

Underneath that: a candidate who applied to a Senior Producer role eighteen months ago and was passed over. Her updated public record now matches a current opening better than anyone in the active pipeline. Her profile has been quietly maintained in the background, with her consent. The outreach is drafted. One click sends it.

She does not log into Workday. She does not log into Greenhouse. She does not log into Salesforce. The data is moving across all three, plus the seven other systems her company runs. The surface she interacts with is the one that turns that movement into ranked decisions, quantified costs, and pre-built workflows.

She approves what she wants to approve. She edits what she wants to edit. She sends back what doesn't look right.

This is the news feed. It is the valuable layer now.

Three is the floor

The reason talent is the better example than sales is that talent has not one System of Record but many, and they have never talked to each other.

The three primary ones are the ATS, the HRIS, and the CRM. The ATS sees candidates but never sees outcomes. The HRIS sees outcomes but never sees the candidate pipeline. The CRM holds call transcripts — the richest signal about how a producer actually performs in the field — and nobody in talent has ever read them.

Most Fortune 500s also run a Predictive Index instance, a separate assessments platform, a performance management system, a compensation database, an LMS, an engagement survey tool, an internal mobility platform, and three or four point solutions for background checks and interview scheduling. Ten to fifteen systems. Each one doing its job competently in isolation for twenty years. None of them aware of each other.

Nobody — not the recruiter, not the hiring manager, not the head of TA, not the CHRO — has ever seen the full causal chain. From resume in the ATS, to assessment scores, to interview transcripts, to revenue numbers two years later in the HRIS, with call transcripts in between explaining why the numbers went the way they did. The chain has not been visible because no human has the time to assemble it.

An agent does.

This is the orchestration problem a16z described, except in talent it's a step harder than in sales. In sales, the systems mostly sit inside the same buyer's wallet and the data is roughly the same kind of data. In talent, the Systems of Record are owned by ten different vendors with ten different schemas, ten different access regimes, and ten different procurement reviews. The only thing that has ever connected them is a person who performed the job for a few years, and when that tenure ends the connection disappears with them.

The agents do not wait

Every AI hiring tool shipped between 2023 and 2025 is reactive. A recruiter types a query into a chat interface. The agent answers. The recruiter decides what to do with the answer. Nothing happens until the recruiter does something.

This is not a System of Intelligence. This is a search engine with a costume.

The System of Intelligence does the work in the other direction. The agents are continuously analyzing across systems, looking for things that should be brought to a human's attention. Flight-risk thresholds crossed. Candidates whose updated record now matches a different open role. Open requisitions whose fill probability has dropped below the historical baseline. Hiring managers whose interview-to-offer ratio is drifting outside the calibrated range. Internal mobility candidates whose stated preferences now align with an open posting.

When the agents identify a gap, they do not log it for human review. They brainstorm the response, draft the workflow — the outreach, the retention conversation, the reranking of the pipeline, the rejection email — and present it for approval.
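
The proactive pattern can be sketched in a few lines, under stated assumptions. The `Proposal` type, the `scan` function, the employee IDs, and the score histories below are all hypothetical. The only figure taken from the text is the 78% flight-risk threshold from the morning feed. The point of the sketch is the shape of the loop: detect a crossing, draft a proposal, and leave it pending until a human approves.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """A drafted action waiting for a human decision (hypothetical type)."""
    employee_id: str
    reason: str
    status: str = "pending_approval"  # nothing executes until someone approves


def crossed_upward(history: list[float], threshold: float) -> bool:
    """True when the window starts below the threshold and ends at or above it."""
    return len(history) >= 2 and history[0] < threshold <= history[-1]


def scan(scores_by_employee: dict[str, list[float]], threshold: float) -> list[Proposal]:
    """Draft one proposal per employee whose score crossed the threshold in the window."""
    proposals = []
    for employee_id, history in scores_by_employee.items():
        if crossed_upward(history, threshold):
            proposals.append(Proposal(employee_id, f"flight risk crossed {threshold:.0%}"))
    return proposals


# Illustrative score histories over a recent window, oldest first.
window = {
    "emp-001": [0.61, 0.70, 0.81],  # crossed during the window
    "emp-002": [0.85, 0.86, 0.88],  # already above, no new crossing
    "emp-003": [0.40, 0.42, 0.45],  # still below
}
pending = scan(window, threshold=0.78)
```

Only `emp-001` produces a proposal here. Flagging crossings rather than every score above the threshold is a design choice in this sketch: it keeps the feed from repeating the same alert every day.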

At runtime, the customer-facing surface is twelve agents, each owning a piece of the work: screening, sourcing, interviewing, ramp, retention, internal mobility, succession, manager intelligence, candidate experience, requisition operations, EEOC monitoring, and decision-trace logging. An orchestrator coordinates them. Twenty-five layers of backend agents — feature engineering, embedding generation, bias monitoring, adversarial review, model evaluation, schema reconciliation across the customer's many Systems of Record — support the twelve.

The recruiter does not see the orchestration. The recruiter sees a feed. The work happens behind the feed.

When every applicant looks the same

Every open role in 2026 receives thousands of applications, and most of the resumes were written by the same three models. Applicants with very different actual abilities arrive looking remarkably similar on paper. Keyword density has converged. Bullet structure has converged. The signal-to-noise ratio in the average applicant pool has collapsed in the last twenty-four months, and it will keep collapsing as AI displaces more workers, who then apply for the roles that are still hiring.

From the recruiter's seat: the time available to evaluate each candidate has not grown, the volume has multiplied by ten, and the differential signal on the resume has flattened. The standard response — tighter keyword filters, mandatory experience floors, automated rejection of anyone who doesn't tick a box — eliminates the same top performers the screening filters were already eliminating before AI broke the resume.

The System of Intelligence reads the resume against the company's actual top-performer pattern, not its mental model of one. For every candidate above a calibrated threshold, an AI screening agent runs a structured first-round interview generated from the company's own historical interview-to-outcome data. The interviews are asynchronous. Candidates take them on their schedule. Every candidate above the threshold gets the same depth of evaluation. The recruiter spends time on the candidates the model has identified as actual matches, not on the candidates the keyword filter happened to surface.

Everyone gets a fair first look. The recruiter still makes the final call. The funnel widens at the top and narrows on signal that actually predicts.

The Performance Genome

a16z made one observation in their piece that deserves its own paragraph. Every company bleeds institutional knowledge when employees turn over. A System of Intelligence that has been ingesting how that person worked, what they said, what they wrote, what they decided, can hand the whole context over to a successor. They called it institutional memory you can ship.

We call it the Performance Genome.

When a top producer with eight years of accumulated context exits a Fortune 500 insurance carrier, almost everything she knew goes with her. The handoff is a half-hour conversation, an org chart, and a folder of half-organized documents. The next person spends the first twelve months reconstructing what the predecessor already knew. By the time the new hire is fully productive, the cost of the gap has shown up in revenue.

The Performance Genome is the full behavioral signature of the company's top performers, extracted continuously from the systems they work inside — how they triage their pipeline, what they say on calls, how they sequence accounts, which signals they act on, which they ignore. The Genome is not a document. It is a model.

A new hire entering the role does not get a binder. She gets a ramp agent that knows what the top performers in her exact role did in her exact situations. At 11pm on a Tuesday, when she is rewriting her cold-call opening and not sure who to ask, the ramp agent has the answer. When her first prospect raises an objection she has never heard, the ramp agent has the seven historical responses from the top three producers in the territory, ranked by which produced the best outcomes. None of this requires her to ask. The agent has been watching the work in real time and surfacing the relevant pattern at the moment of need.

At one Fortune 500 carrier, ramp on the producer cohort was 8 to 12 months. With the Performance Genome and the ramp agent in production, it compressed to six weeks. Each 30-day reduction in ramp on that cohort is worth $1,357 per agent per year in incremental revenue. At a 2,000-hire annual volume, the difference is between the current workforce and a meaningfully better one.
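
The arithmetic behind that claim can be worked through directly. The two inputs come from the text. The function name is hypothetical, and two things are assumptions: that the per-agent figure applies to each of the 2,000 annual hires, and that value scales linearly with the number of 30-day periods saved.

```python
VALUE_PER_PERIOD = 1_357  # dollars per agent per year for each 30-day ramp reduction (from the text)
ANNUAL_HIRES = 2_000      # annual hiring volume (from the text)


def cohort_value(periods_saved: float) -> float:
    """Yearly incremental revenue across the cohort.

    Assumes every hire realizes the per-agent figure and that value
    scales linearly with the number of 30-day periods saved.
    """
    return periods_saved * VALUE_PER_PERIOD * ANNUAL_HIRES


per_period = cohort_value(1)  # one 30-day reduction across 2,000 hires
```

Under those assumptions, a single 30-day reduction is worth $2,714,000 per year across the cohort. Extending this to the full ramp compression would lean harder on the linearity assumption than the source supports.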

The asset that compounds, quarter over quarter, is the customer's own Genome. The longer the system runs, the more accurate the pattern. The more accurate the pattern, the faster the ramp. The faster the ramp, the larger the production lift on the cohort. The compounding belongs to the customer.

Where the thesis breaks

a16z's piece is precise about what the System of Intelligence does and approximate about how it should be built. The approximation is where the thesis hits the wall of regulated enterprise.

The assumed architecture is the one most AI-native GTM startups have shipped: a multi-tenant cloud platform that ingests data from the customer's Systems of Record via API, reasons over it in a shared inference layer, and writes back. For sales motion that is mostly fine. The data is operational and the regulatory exposure is limited. For talent it is a non-starter at any Fortune 500.

Performance data is sensitive. Compensation data is sensitive. PII on candidates and employees is sensitive. EEOC adverse-impact data, NYC AEDT and analogous AI-hiring disclosure data, SOX-controlled records on financial services employees, HIPAA-relevant data on healthcare workers — every category of data the talent System of Intelligence has to read to do its job is regulated under at least one framework that prohibits sending it to a third-party API. The procurement review at every regulated carrier and bank rejects the architecture on principle, before the technical evaluation begins.

We have seen this six times in eighteen months at a single carrier. Six AI hiring vendors rejected before reaching production — each technically capable, each architecturally incompatible. Not because the products were bad. Because the data couldn't leave.

The System of Intelligence in talent has to deploy in the customer's environment. Single-tenant. VPC-resident. Model weights owned by the customer. No data egress, ever.

The architecture is the product.

Any vendor that did not start with this constraint is now in the position of rebuilding from the ground up to meet it, and most of them will not survive the rebuild.

This is the gap in the thesis as written. The thesis is correct that the value layer moves up the stack. It is silent on where that layer runs. For non-regulated GTM the question barely matters. For regulated enterprise — which is most of the Fortune 500, including every carrier, every bank, every hospital system — the question is the only one that matters.

The proof

The argument so far is theoretical. The reason to take it seriously is that the production data already exists.

At a Fortune 500 insurance carrier, we deployed the System of Intelligence inside the carrier's VPC, integrated with their ATS, HRIS, and CRM, and ran it against the producer cohort. Over four years, the data covers 10,765 agents.

We tested every screening filter the carrier had been using: keywords, years of industry experience, prior employer, candidate assessments. That included 8,181 candidate keywords, tested against four years of post-hire production. After Bonferroni correction for multiple comparisons, none positively predicted sustained performance. Thirty were anti-predictive, meaning they correlated with lower output. The industry-experience filter, which the carrier had relied on for two decades, eliminated 80% of their eventual top performers from the pipeline. The cumulative funnel eliminated 98% of them.
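
For readers unfamiliar with the correction, here is a minimal sketch of how Bonferroni works. It is not the analysis code from the study. The function names, the toy p-values, and the 0.05 significance level are all assumptions. The only number taken from the text is the 8,181 keywords.

```python
def bonferroni_threshold(alpha: float, num_tests: int) -> float:
    """Per-test p-value cutoff that keeps the family-wise error rate at alpha."""
    return alpha / num_tests


def significant(p_values: dict[str, float], alpha: float = 0.05) -> list[str]:
    """Names of the tests whose p-value survives the Bonferroni cutoff."""
    cutoff = bonferroni_threshold(alpha, len(p_values))
    return [name for name, p in p_values.items() if p < cutoff]


NUM_KEYWORDS = 8_181  # number of keywords tested, from the text
cutoff = bonferroni_threshold(0.05, NUM_KEYWORDS)  # about 6.1e-06

# Toy example with three made-up p-values: the cutoff is 0.05 / 3.
toy = {"kw_a": 0.001, "kw_b": 0.04, "kw_c": 0.0000001}
survivors = significant(toy)
```

With 8,181 simultaneous tests and an assumed 0.05 level, a keyword needs a p-value below roughly 0.000006 to count. The sketch only checks significance. Deciding whether a surviving keyword is predictive or anti-predictive also requires the sign of its effect, which is not modeled here.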

The intelligence layer, trained on the carrier's own outcome data, scored the same candidates differently. Hire rate moved from 14.0% to 27.7% across 6,053 hires. Time-to-hire compressed by 47 days. Ramp-to-production compressed from a range of 8 to 12 months down to 6 weeks. Retention on the cohort moved from 64% to 91% in the first year. The CFO at the carrier validated $1.58 million in net savings in the first quarter alone.

These are not projections. They are what already happened. The methodology, including the adversarial review protocol and the decision-trace logging, is published on arXiv: Decision Traces. The paper is the documentation for the thesis. The customer's production environment is the proof.

What is being built

The next decade of enterprise talent software is being built at this layer. Not at the ATS layer, where the database vendors will continue to compete on integrations and pricing. Not at the HRIS layer, where Workday will continue to be Workday. At the layer above both, where an AI-native System of Intelligence reads from the existing Systems of Record, reasons across them, and surfaces decisions worth making — with quantified costs and pre-built workflows.

The companies that win this layer share three traits. One: they were architected from day one to deploy inside the customer's environment, because that is the only architecture procurement will approve. Two: they own no data, because the data stays with the customer, and they build a moat out of the customer's accumulating intelligence rather than the customer's accumulating data. Three: they treat the existing Systems of Record as infrastructure, not as competition.

a16z's piece is the framework. This is the implementation.

Saad Bin Shafiq is the founder of Nodes, the AI talent intelligence platform deployed in customer VPCs at Fortune 500 insurance, financial services, and other regulated enterprises.